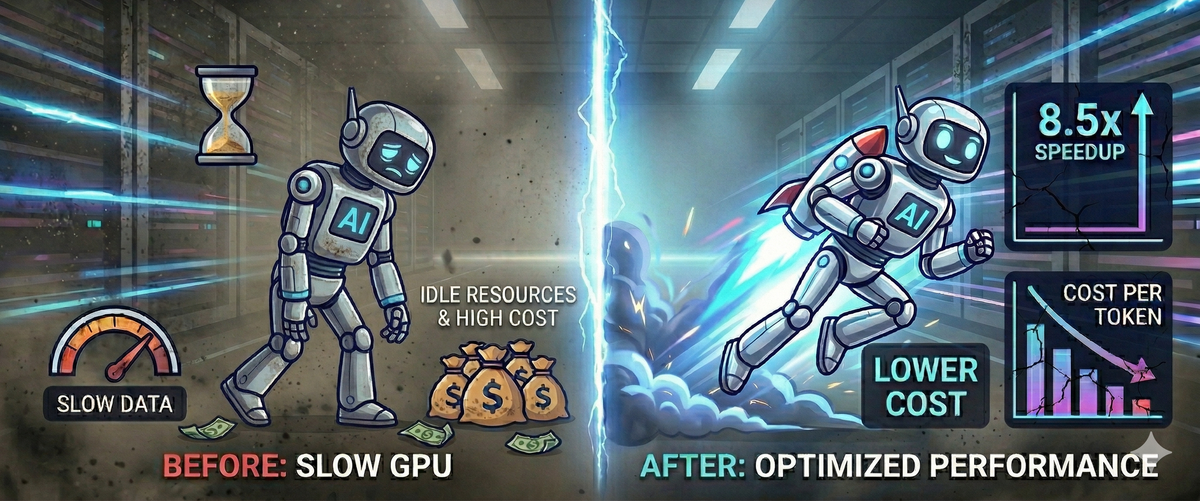

Your AI Is Slower Than It Needs to Be — Here's Why, and What We Did About It

Standard AI inference leaves most of your GPU sitting idle. Here is how we built a custom GPU attention kernel that removes the memory bottleneck and delivers an 8.5× per-token attention speedup without changing the model or the hardware.

How a single engineering project cut the cost of running large language models by over 8×.

Every company running an AI assistant, a chatbot, or a document analysis tool is paying for GPU time. Every second a model takes to generate a response is a second of compute you are billed for. Most teams accept this as the cost of doing business with AI.

It does not have to be this way.

We recently completed a project that cut the time the attention computation takes for each generated token by 8.5×, without changing the model, without upgrading the hardware, and without touching the product at all. The only thing we changed was how the GPU is used.

The Problem Most People Don't Know Exists

When a language model generates text, it does not produce the full response at once. It generates one token (a word or a piece of a word) at a time, and each token requires its own forward pass through the model. This is called the decode phase, and for most chat and generation workloads it is where the bulk of inference cost lives.

A standard, naive PyTorch implementation of attention leaves the vast majority of the GPU sitting idle. On an NVIDIA T4, our naive baseline reached only 16–17 GB/s of effective memory bandwidth, about 5% of the 320 GB/s the hardware can deliver. The rest of the hardware is waiting: you are paying for a full GPU and using a fraction of it.

Why? Because the standard implementation runs attention as separate steps. It writes each intermediate result (first the attention scores, then the softmax probabilities) out to slow off-chip GPU memory and reads it back for the next step, before finally writing the output. On a GPU whose memory bandwidth is 320 GB/s, these round-trips, not the arithmetic, are the bottleneck. The math itself takes almost no time.
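For readers who want to see those steps spelled out, here is a minimal pure-Python sketch of one decode step for a single attention head. It is an illustration under simplifying assumptions, not our kernel: `q` is the new token's query vector, `K` and `V` are the cached keys and values as plain lists, and the function name is hypothetical. Each stage builds a complete intermediate list, which is what a naive GPU implementation stores in off-chip memory between kernel launches.

```python
import math
from typing import List

Vector = List[float]


def naive_decode_attention(q: Vector, K: List[Vector], V: List[Vector]) -> Vector:
    """One decode step for one head, computed as three separate stages."""
    scale = 1.0 / math.sqrt(len(q))

    # Stage 1: a score for every cached token, kept as a full list
    # (a naive GPU version writes this array to off-chip memory).
    scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in K]

    # Stage 2: softmax over the whole score list (read back, then written again).
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Stage 3: probability-weighted sum of the cached values (probs read back again).
    out = [0.0] * len(V[0])
    for p, v in zip(probs, V):
        for j, vj in enumerate(v):
            out[j] += p * vj
    return out


print(naive_decode_attention([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0]], [[1.0, 2.0], [3.0, 4.0]]))
```

Both `scores` and `probs` hold one entry per cached token, so the traffic they generate grows with the length of the conversation.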

What We Built

We built a custom GPU kernel for attention, the core computation that runs inside every transformer model during inference. The kernel eliminates those unnecessary memory operations entirely.

Instead of writing intermediate results out to memory and reading them back, our kernel does everything in a single pass for each token:

- Loads the query vector once into the fastest on-chip memory

- Streams the conversation history (the cached keys and values, or KV cache) through in small tiles

- Computes the full attention result entirely in registers — never writing anything intermediate to slow memory

- Writes the final output exactly once
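The single-pass idea can be sketched in pure Python using the running-maximum ("online") softmax that FlashAttention-style kernels rely on. This is a simplified, hypothetical illustration rather than our CUDA kernel: plain Python floats stand in for registers, and `tile` is an assumed tile size. Only a running maximum, a running denominator and an output accumulator survive between tiles, so no array of scores or probabilities is ever stored.

```python
import math
from typing import List

Vector = List[float]


def fused_decode_attention(q: Vector, K: List[Vector], V: List[Vector],
                           tile: int = 4) -> Vector:
    """One decode step for one head in a single pass over the KV cache.

    Only three running quantities persist between tiles (a GPU kernel would keep
    them in registers); no full array of scores or probabilities is built.
    """
    scale = 1.0 / math.sqrt(len(q))
    m = -math.inf               # running maximum score seen so far
    denom = 0.0                 # running sum of exp(score - m)
    acc = [0.0] * len(V[0])     # running sum of exp(score - m) * value

    for start in range(0, len(K), tile):      # stream the cache one tile at a time
        k_tile = K[start:start + tile]
        v_tile = V[start:start + tile]
        s_tile = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in k_tile]

        new_m = max(m, max(s_tile))
        correction = math.exp(m - new_m)      # rescale earlier sums to the new max
        weights = [math.exp(s - new_m) for s in s_tile]

        denom = denom * correction + sum(weights)
        acc = [a * correction for a in acc]
        for w, v in zip(weights, v_tile):
            acc = [a + w * vj for a, vj in zip(acc, v)]
        m = new_m

    return [a / denom for a in acc]           # the only write of the final output
```

Rescaling by `exp(m - new_m)` whenever a larger score appears is what lets the softmax be computed without first seeing every score. The result matches a three-stage computation up to floating-point rounding, for any tile size.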

The result on an NVIDIA T4 GPU:

| Metric | Standard PyTorch | Our kernel |
|---|---|---|
| Effective bandwidth used | 16–17 GB/s | 136 GB/s |
| Speedup per token | 1× (baseline) | 8.5× |
| GPU memory ceiling | 320 GB/s | 320 GB/s |

Same GPU. Same model. 8.5× more efficient use of the hardware.
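To connect the table's bandwidth figures to time, the sketch below estimates how many bytes one decode step must read from the KV cache and how long that takes at a given effective bandwidth. The layer shape (12 heads, head dimension 64, 16-bit values, 8,192 tokens of context) is a hypothetical example, not the benchmarked model; only the 16, 136 and 320 GB/s figures come from the table.

```python
def kv_cache_bytes(n_heads: int, seq_len: int, head_dim: int,
                   bytes_per_elem: int = 2) -> int:
    """Bytes of cached keys plus values that one decode step reads for one layer."""
    return 2 * n_heads * seq_len * head_dim * bytes_per_elem


def attention_time_ms(bytes_moved: int, effective_gbps: float) -> float:
    """Time to move `bytes_moved` at a sustained effective bandwidth (GB/s)."""
    return bytes_moved / (effective_gbps * 1e9) * 1e3


PEAK_GBPS = 320.0  # T4 memory bandwidth ceiling, from the table

# Hypothetical layer: 12 heads, head_dim 64, fp16 values, 8,192 cached tokens.
nbytes = kv_cache_bytes(n_heads=12, seq_len=8192, head_dim=64)
for label, gbps in [("baseline", 16.0), ("our kernel", 136.0), ("ceiling", PEAK_GBPS)]:
    print(f"{label:>10}: {gbps / PEAK_GBPS:6.1%} of peak, "
          f"{attention_time_ms(nbytes, gbps):.3f} ms per layer per token")
```

When both versions must read the same bytes, the time ratio equals the bandwidth ratio (136 / 16 = 8.5). At 136 GB/s the kernel reaches 42.5% of the T4's ceiling, against roughly 5% for the baseline.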

Going Further: Flash-Decoding for Long Conversations

The gains grow further when conversation history is long. At batch size 1, a standard decode attention kernel assigns one worker (a GPU thread block) per attention head. For a 12-head model, that means 12 workers on a GPU with 40 streaming multiprocessors (SMs), such as the T4, so 28 of the 40 SMs sit idle for every token generated.

We implemented Flash-Decoding, a technique that splits the conversation history into chunks, processes the chunks on all available SMs simultaneously, and then merges the partial results in a short final reduction. For a 12-head model with 8 history chunks, we launch 96 parallel workers instead of 12, keeping all 40 SMs busy.
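The split-and-merge structure of Flash-Decoding can be sketched in pure Python. This illustrates the technique under simplifying assumptions (one attention head, batch size 1, chunks processed one after another instead of on separate SMs), and all function names are hypothetical. Each worker returns its chunk's maximum score, softmax denominator and weighted sum of values. The final reduction rescales every partial result to the global maximum before normalizing, which reproduces exact attention.

```python
import math
from typing import List, Tuple

Vector = List[float]
Partial = Tuple[float, float, Vector]   # (chunk max score, sum of exps, weighted value sum)


def partial_attention(q: Vector, K: List[Vector], V: List[Vector]) -> Partial:
    """Work done by one worker on its chunk of the KV cache."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in K]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    acc = [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
    return m, sum(weights), acc


def combine(partials: List[Partial]) -> Vector:
    """Final reduction: rescale every chunk to the global max, then normalize."""
    g = max(m for m, _, _ in partials)
    denom = sum(total * math.exp(m - g) for m, total, _ in partials)
    dim = len(partials[0][2])
    return [sum(acc[j] * math.exp(m - g) for m, _, acc in partials) / denom
            for j in range(dim)]


def flash_decode(q: Vector, K: List[Vector], V: List[Vector], n_splits: int) -> Vector:
    """Split the cache into n_splits chunks, process each, then merge the results."""
    chunk = math.ceil(len(K) / n_splits)
    # On a GPU each chunk would run on its own thread block; here they run in turn.
    partials = [partial_attention(q, K[i:i + chunk], V[i:i + chunk])
                for i in range(0, len(K), chunk)]
    return combine(partials)


def workers(n_heads: int, n_splits: int = 1) -> int:
    """Thread blocks per decode step at batch size 1: one per head per chunk."""
    return n_heads * n_splits
```

Here `workers(12)` gives 12 thread blocks, leaving 28 of a T4's 40 SMs idle, while `workers(12, 8)` gives the 96 blocks described above. The extra cost is the small `combine` step, which touches only one partial result per chunk.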

At 8,192 tokens of context (a long document or extended conversation), this adds a further 26% improvement on top of the base kernel gains:

| Context length | Standard PyTorch | Our kernel | Flash-Decoding |
|---|---|---|---|
| 512 tokens | 1× | 1.7× | — |
| 2,048 tokens | 1× | 3.4× | — |
| 4,096 tokens | 1× | 4.1× | 5.1× |
| 8,192 tokens | 1× | 6.5× | 8.2× |

The longer the conversation, the bigger the advantage.
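The 26% figure is the ratio of the two right-hand columns at 8,192 tokens. A small sketch of that arithmetic, using only the speedups from the table:

```python
# Speedups over standard PyTorch, taken from the table above.
SPEEDUPS = {
    4096: (4.1, 5.1),   # (our kernel, with Flash-Decoding)
    8192: (6.5, 8.2),
}


def extra_gain(kernel_speedup: float, flash_decoding_speedup: float) -> float:
    """Additional fractional improvement Flash-Decoding adds over the base kernel."""
    return flash_decoding_speedup / kernel_speedup - 1.0


for context, (kernel, flash) in SPEEDUPS.items():
    print(f"{context:>5} tokens: +{extra_gain(kernel, flash):.0%} from Flash-Decoding")
```

The same calculation at 4,096 tokens gives roughly 24%.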

What This Means in Practice

Faster responses

Token generation time drops in direct proportion to the speedup. An 8× improvement means a response that previously took 4 seconds now takes about half a second. For customer-facing applications, that is the difference between a product that feels responsive and one that feels slow.
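The 4-second example assumes the kernel speedup applies to the entire per-token time. The sketch below reproduces that arithmetic and, as a hedged generalization, applies Amdahl's law for the case where attention is only part of each token's time. The `attention_fraction` parameter is hypothetical, not a measured value.

```python
def response_time_s(old_time_s: float, kernel_speedup: float,
                    attention_fraction: float = 1.0) -> float:
    """New response time when only `attention_fraction` of it is sped up (Amdahl's law)."""
    if not 0.0 <= attention_fraction <= 1.0:
        raise ValueError("attention_fraction must be between 0 and 1")
    accelerated = old_time_s * attention_fraction / kernel_speedup
    unchanged = old_time_s * (1.0 - attention_fraction)
    return accelerated + unchanged


print(response_time_s(4.0, 8.0))                            # attention is all of the time
print(response_time_s(4.0, 8.5))
print(response_time_s(4.0, 8.5, attention_fraction=0.5))    # hypothetical 50% share
```

With `attention_fraction=1.0`, the 4-second response drops to 0.5 s at 8× and about 0.47 s at 8.5×. Profiling how much of each token's time is spent in attention tells you which case applies to your workload.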

Lower compute cost

If you are running on cloud GPUs (AWS, GCP, Azure, etc.), you pay by the hour. An 8× improvement in inference throughput means you can serve 8× more requests on the same hardware. Alternatively, you can run the same workload on one-eighth as many GPUs, cutting your inference bill by up to 87.5%.
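The fleet-size arithmetic can be sketched the same way. The request rates below are hypothetical placeholders; only the 8× factor comes from the text.

```python
import math


def gpus_needed(load_rps: float, per_gpu_rps: float) -> int:
    """Whole GPUs needed to serve `load_rps` requests per second."""
    return math.ceil(load_rps / per_gpu_rps)


def bill_reduction(speedup: float) -> float:
    """Fraction of GPU spend saved when per-GPU throughput rises by `speedup`."""
    return 1.0 - 1.0 / speedup


# Hypothetical workload: 400 requests/s, 10 requests/s per GPU before optimization.
before = gpus_needed(400, 10)
after = gpus_needed(400, 10 * 8)
print(f"{before} GPUs -> {after} GPUs, bill cut by {bill_reduction(8):.1%}")
```

Because GPUs come in whole units, real savings arrive in steps, so a small fleet may not capture the full 87.5%.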

No model changes required

This is an infrastructure-level optimization. The model weights, the outputs, the product: nothing changes. Users see faster, cheaper responses, and the AI produces the same results, up to floating-point rounding.

Scales with your context length

The more conversation history your application retains, the bigger the efficiency gain. Applications with long documents, extended customer conversations, or retrieval-augmented generation pipelines benefit most.

How It Compares to Standard Solutions

The techniques we implemented are the same ones used in the FlashAttention library and in production inference engines such as vLLM and Hugging Face TGI, which power many commercial LLM deployments.

| Our implementation | Industry equivalent |
|---|---|
| Fused single-pass attention | FlashAttention (widely adopted across major inference frameworks) |
| In-kernel position encoding | vLLM, Hugging Face TGI |
| Flash-Decoding for long context | Flash-Decoding (Tri Dao, Stanford, 2023) |

The difference is that we build and tune these kernels directly for your hardware configuration, model architecture, and deployment constraints rather than relying on general-purpose library defaults that may not be optimized for your specific use case.

What We Deliver

A custom inference kernel, validated against your existing model, benchmarked on your target hardware, and integrated into your serving stack. Specifically:

- Profiled baseline — we measure your current inference cost accurately, including identifying any measurement artifacts that inflate apparent performance

- Fused attention kernel — custom-built for your model architecture and GPU fleet

- Flash-Decoding where applicable — for workloads with long context windows

- Verified correctness — outputs match the original model within a validated tolerance of 0.01

- Benchmark report — showing before/after throughput and latency at your operating context lengths
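A correctness check of this kind can be sketched as an element-wise comparison of the original and optimized outputs. The 0.01 threshold comes from the list above. Treating it as a maximum absolute difference is our assumption, and the function names are hypothetical. In a PyTorch pipeline, `torch.allclose(actual, expected, rtol=0.0, atol=0.01)` performs a similar check on tensors.

```python
from typing import Sequence


def max_abs_error(reference: Sequence[float], candidate: Sequence[float]) -> float:
    """Largest element-wise difference between two equally sized outputs."""
    if len(reference) != len(candidate):
        raise ValueError("outputs have different lengths")
    return max(abs(r - c) for r, c in zip(reference, candidate))


def outputs_match(reference: Sequence[float], candidate: Sequence[float],
                  tolerance: float = 0.01) -> bool:
    """True when every element of `candidate` differs from `reference` by less than `tolerance`."""
    return max_abs_error(reference, candidate) < tolerance


# Hypothetical outputs, e.g. logits from the original and the optimized model.
print(outputs_match([0.5, 1.25, -2.0], [0.5004, 1.2493, -2.0011]))
```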

Is This Right for You?

This work is most valuable when:

- You are running LLM inference at scale and GPU cost is meaningful

- Your application involves long conversations, long documents, or extended context

- You are using a transformer model for generation (GPT-style, LLaMA, Mistral, and similar architectures)

- You want to serve more users without scaling your GPU fleet

If you are generating fewer than a few thousand tokens per day, the economics may not justify a custom kernel. If you are running continuous inference at scale, the return is immediate and compounding.

The Short Version

Standard AI inference is wasteful by default. The GPU sits mostly idle because the software writes unnecessary data to slow memory and then reads it back. We fix this at the kernel level (the lowest layer of the software stack), using techniques proven in production in some of the largest-scale AI systems in the world.

The result: the same model, the same GPU, generating tokens 8× faster and at 8× lower cost per token.

Interested in understanding what this could mean for your specific workload? We are happy to run a benchmark against your current setup and give you concrete numbers before any commitment.